Evaluation of the quality of AI-generated translation – locatheart’s case study

It has been a while since we last reflected on the topic of generative artificial intelligence (GenAI) on locatheart’s website. In the meantime, many aspects of human lives have become permeated with content created entirely or largely with tools such as ChatGPT, Claude, Grok, or Perplexity.

Translations are not an exception – for example, YouTube often enables live translation of videos (using subtitles or dubbing) with Google Translate, which itself, to a significant extent, operates similarly to AI models. Nonetheless, it seems that nowadays slightly more people use strictly AI-based tools for ad-hoc translations – and not software such as Google Translate or DeepL.

According to StatCounter’s data for March 2026, the vast majority of netizens use, first and foremost, OpenAI’s chatbot – ChatGPT. What’s more, the tech giant itself states that among the 900 million weekly active users there are ca. 50 million who have paid accounts (5.6%).

Based on these pieces of information, we decided to test the free version of the most widespread AI service – ChatGPT. The model used was GPT-5.3.

In today’s article, we’re going to find out how the most popular GenAI model currently handles translations and answer an important question: “How to evaluate the quality of AI-generated translation?”

Task 1

The first text that we asked the AI tool to translate was a case study article from locatheart’s website: Football game localisation into Polish. In the initial prompt, we requested that ChatGPT render the pasted text into Polish.

Superficially, the resulting translation appears to be of rather good quality. ChatGPT doesn’t translate “word-for-word”, uses contextually fitting expressions, its style is generally correct, and the machine-translated article reads quite well.

Even so, the relatively short text that ChatGPT generated included several errors worth mentioning, as they are good examples of “systematic” flaws of AI tools in the field of translation.

| Input text (EN) | AI translation (PL) | LAH’s comment |
| --- | --- | --- |
| Not long before UEFA Euro 2024 | Niedługo przed mistrzostwami UEFA Euro 2024 | The translation is technically and terminologically fine; however, it sounds somewhat “corporate” – as if using the tournament’s official name (“UEFA Euro 2024”), which is natural in English but not necessarily in Polish, was mandatory. “Niedługo przed mistrzostwami Europy w piłce nożnej” (lit. “Not long before European football championship”) or “Niedługo przed Euro 2024” (“Not long before Euro 2024”) would be optimal solutions. |
| Do you want to localise gaming content? | Czy chcesz zlokalizować treści gamingowe? | This particular question is a CTA – meaning it must be catchy. In Polish, questions formed with the word “czy” are usually formal-sounding, so dropping it would be more adequate; additionally, it can be argued that the adjective “gamingowe” relates only to video games. |
| What’s more, it can be trusted when it comes to the correct spelling of players’ or clubs’ names | Co więcej, można jej zaufać w kwestii poprawnej pisowni nazw zawodników czy klubów | Definitely the biggest error – the word “nazw” is used to translate “names”; however, the Polish word doesn’t refer to people, in whose case it should be e.g. “imion i nazwisk” (“first and last names”). Meanwhile, in English, the same noun works for both players and clubs. |
| whenever there were any doubts or questions, the translator was ready to answer them | w razie wątpliwości tłumacz był gotowy do udzielenia wyjaśnień | The sense was maintained (lit. “in case of doubts, the translator was ready to provide explanations”) but the fragment reads very formally – you could think it is about explaining your tax declaration to the revenue. |
| our project manager received general remarks concerning potential reception of the game by Polish audiences | kierownik projektu otrzymał także ogólne uwagi dotyczące potencjalnego odbioru gry przez polskich użytkowników | The PM referred to in the sentence is a woman; obviously, neither AI nor a human translator (outside of LAH) needs to know this, but one can always ask to make sure – which the machine didn’t do. |
| It was noticed that the quiz features way too many questions about British footballers | Zauważono, że quiz zawiera zdecydowanie zbyt wiele pytań dotyczących brytyjskich piłkarzy | Impersonal forms, such as “zauważono”, are seen as marked outside of formal contexts. |

Is the AI-translated content of locatheart’s article understandable, and does it convey the relevant information? Yes. Would we publish it online unrevised? Definitely not. If, for whatever theoretical reason, we were forced to use AI translation, we would certainly task a qualified locatheart employee with revision and proofreading.

Someone could raise an objection here and say that the AI-generated translation can be polished further with ChatGPT, too. Let’s see, then, what happens in this scenario. We tried twice; in both cases, we asked ChatGPT to review the translation that it had generated earlier (in a separate conversation).

Sadly, the AI didn’t correct the part about “players’ names”.

Also in a new conversation, we asked for revision of the full translation created by the AI. The fragment in question currently looks as follows:

The text was changed but one error was swapped for another. It now reads, “nazwiskami (…) klubów” (“clubs’ surnames”), which is arguably even more jarring.

If it weren’t for the fact that LAH employees are native speakers of Polish – and English is their main foreign language – there’d be no way for us to realise that the AI’s translation is flawed. Unfortunately, even ordering ChatGPT to review its own translation doesn’t fix the problem. There is one key takeaway: a human is and likely always will be indispensable in the translation pipeline in order to reduce the number of errors to the absolute minimum. AI tools can’t be trusted, at least not completely.

Task 2

For the second test, we asked ChatGPT to translate another article from locatheart’s blog. In this case, the target text contains fewer mistakes than previously, but one part is worth quoting, as it demonstrates the importance of natural style:

EN (original text): The era of copy-pasting is falling into oblivion. And no one will miss it! After all, this method is highly monotonous and involves the risk of making simple mistakes (such as copying the content only partially, or mixing up different language versions).

PL (AI-generated translation): Czasy kopiowania i wklejania powoli odchodzą do lamusa – i nikt nie będzie za nimi tęsknił! Metoda ta jest bowiem bardzo monotonna i wiąże się z ryzykiem prostych błędów (np. częściowego skopiowania treści lub pomylenia wersji językowych).

While we need to praise the elegant rendering of “falling into oblivion” as “odchodzą do lamusa” or merging two original sentences with a dash, the phrase “częściowe skopiowanie treści” (“partially copying the content”) isn’t fitting. It doesn’t accurately convey the meaning of “only partially” – it would be much better to phrase it as “skopiowania treści jedynie częściowo” (“copying the content only partially”) or even “pominięcia części tekstu przy kopiowaniu” (“omitting part of the content while copying”).

Task 3

The previous two examples came from rather general texts – as it turns out, AI makes even more serious mistakes when translating an article about farming.

We looked at a study summary concerning a fertiliser, taken from its manufacturer’s website.

A significant error appears already at the beginning of the ChatGPT-generated translation. The English original reads, “In these results, Polysulphate increased the yield of oilseed rape by up to 33%, between 200 kg/ha and 1.15 t/ha (T2 gave the best results).”

The AI-translated Polish version reads, “W przedstawionych wynikach Polysulphate zwiększył plon rzepaku ozimego nawet o 33%, tj. od 200 kg/ha do 1,15 t/ha (najlepsze wyniki uzyskano w wariancie T2).” (lit. “In the presented results, Polysulphate increased the winter rapeseed yield even by 33%, i.e., from 200 kg/ha to 1.15 t/ha (best results were observed in variant T2).”)

Depending on interpretation, the translation can be highly misleading – you could come to the conclusion that the initial yield was 200 kg/ha, and the final yield equalled as much as 1.15 t/ha. This wouldn’t be 33% growth – but almost 500%! The intended meaning is, on the contrary, that the yield increase was between 200 kg/ha (a “modest” improvement) and 1.15 t/ha, where the latter is a one-third increase when compared to the control.
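To make the difference between the two readings concrete, here is a minimal Python sketch of the arithmetic. It uses only the figures quoted in the sentence; the function names are our own, and the control yield is not reported in the source – it is merely derived under the assumption that the 1.15 t/ha gain corresponds to the “up to 33%” figure.

```python
# Two readings of "increased the yield by up to 33%,
# between 200 kg/ha and 1.15 t/ha".
# Figures are taken from the quoted sentence; the control yield is
# derived here as an assumption, it is not given in the source.

LOW_KG_PER_HA = 200.0      # lower bound quoted in the source
HIGH_KG_PER_HA = 1150.0    # 1.15 t/ha expressed in kg/ha
MAX_RELATIVE_GAIN = 0.33   # "up to 33%"


def growth_if_read_as_before_and_after(start_kg, end_kg):
    """Misreading: 200 kg/ha is the initial yield, 1.15 t/ha the final one."""
    return (end_kg - start_kg) / start_kg


def implied_control_yield(gain_kg, relative_gain):
    """Intended reading: 1.15 t/ha is the gain, i.e. 33% of the control."""
    return gain_kg / relative_gain


misread_growth = growth_if_read_as_before_and_after(LOW_KG_PER_HA, HIGH_KG_PER_HA)
control_kg = implied_control_yield(HIGH_KG_PER_HA, MAX_RELATIVE_GAIN)

print(f"Misreading: growth of {misread_growth:.0%}")               # 475%
print(f"Intended reading: control of about {control_kg:.0f} kg/ha")  # 3485
```

Under the misreading, the growth comes out at 475%, nowhere near 33%. Under the intended reading, a 1.15 t/ha gain equal to 33% implies a control yield of roughly 3.5 t/ha, which is consistent with the “one third” figure.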

The AI-translated part is very unclear at best and seriously erroneous at worst.

For the sake of completeness, we might add that in a few places ChatGPT provided the English abbreviation for winter oilseed rape (WOSR), which isn’t used in Polish agricultural jargon.

Task 4

The locatheart translation agency often carries out projects for clients who publish their tabletop games (mostly card and board games) in new markets. No wonder, then, that our analysis had to include a fragment related to this topic – an abbreviated version of Dungeons & Dragons rules posted on Reddit. (We entered the whole post, beginning with “** General Gameplay **”, into the chatbot.)

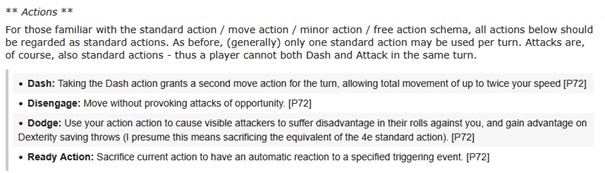

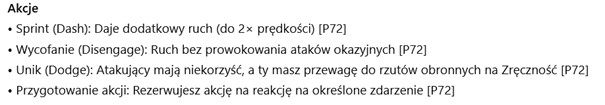

While the terminology used or the understanding of the quite technical description of rules was correct, ChatGPT totally ignored whole parts of the text. The excerpts in question:

EN:

PL (AI):

ChatGPT omitted the explanatory paragraph below “** Actions **” altogether – as well as the word “visible” and the sentence in parentheses (“I presume this means …”).

The prompt we used in no way implied that the translation should ignore parts which can be understood as the original poster’s comments. In all likelihood, the AI deemed them unimportant; however, such a strategy, particularly for phrases longer than one or two words, is unacceptable in the localisation industry. There doesn’t seem to exist a reliable way to avoid similar errors without, at least, editing and proofreading done by people fluent in both the source and target language. If we asked ChatGPT to translate into a language that employs a script unfamiliar to us, we would have no clue that large portions of the text went missing. Also, as we noticed earlier, AI revision doesn’t guarantee correcting all errors.

How to verify the quality of AI-generated translation

The analysed examples of AI translation suggest one thing: a specialised linguist is needed to evaluate its quality. Their objective is to approach the text the same way as a human-made translation – at the end of the day, while not always possible, the end result should be indistinguishable from content created from scratch by a native speaker.

Granted, there may be limited situations in which unreviewed AI-generated translations are perhaps acceptable. For example, if we run an e-store and wish to sell our products abroad, sometimes we may translate their names and/or descriptions using AI. Usually, this type of content only serves a complementary function when compared to product specification or photos – so it might not be the most crucial sales-wise. Still, we can’t assume that AI won’t hallucinate a product name so that, for example, “pen” is translated as an enclosure instead of a biro – or even something completely unrelated.

It must also always be borne in mind that unnatural phrases or meaning-altering errors can discourage a lead. And as we’re already aware, lack of target language proficiency makes it impossible to discern whether a generated translation contains such flaws. By itself, artificial intelligence won’t realise that an increase from 200 kg up to 1.15 t is much more than 33% – a human is likely to give it a second thought.

Wishing to keep your brand’s natural voice in the age of AI? Get in touch with our experts!
